Taking great photos is this generation’s equivalent of knowing five languages and holding a double master’s in rocket science and engineering.

Which is to say, we’d all like to be able to claim it as part of our skill set.

One key to standout photos is memorability – will your snaps last in a viewer’s memory or be but a fleeting arrangement of dismissible pixels? We think Shakespeare asked that.

Anyway, recognizing this, a team at MIT’s Computer Science and Artificial Intelligence Laboratory (CSAIL) has developed an algorithm that can predict how memorable or forgettable an image will be.

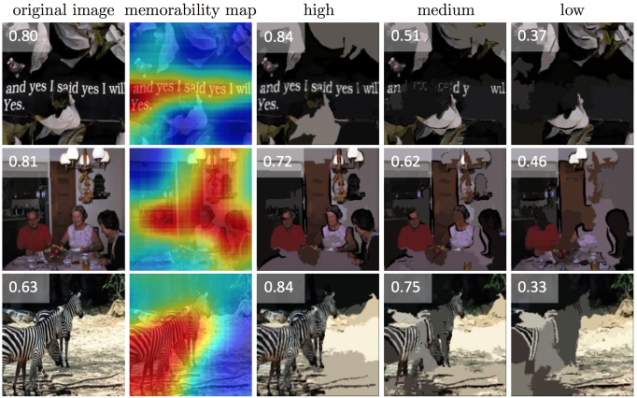

It essentially creates a heat map showing which parts of the image carry the most impact. A photo of a marble countertop would likely come up mostly blue, while a photo of your bestie dancing on the brunch table with a bottle of Champagne in each hand would be marked red. You know, action shots.
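For the curious, here is a toy sketch of how a blue-to-red heat map like that could be colored. It assumes the per-region memorability scores already exist and fall between 0 and 1; the actual MIT model and its color scheme aren't described here, so the linear blue-to-red blend, the helper names `score_to_rgb` and `heat_map`, and the example grid are all hypothetical.

```python
# Toy sketch: color a grid of per-region memorability scores from blue to red.
# ASSUMPTION: scores are precomputed and lie in [0, 1]. This is NOT the MIT
# model, just an illustration of the coloring step. Example values are made up.


def score_to_rgb(score: float) -> tuple[int, int, int]:
    """Blend linearly from blue (forgettable, 0.0) to red (memorable, 1.0)."""
    s = min(max(score, 0.0), 1.0)  # clamp anything outside [0, 1]
    return (round(255 * s), 0, round(255 * (1 - s)))


def heat_map(scores: list[list[float]]) -> list[list[tuple[int, int, int]]]:
    """Apply score_to_rgb to every region in the grid."""
    return [[score_to_rgb(s) for s in row] for row in scores]


if __name__ == "__main__":
    # Hypothetical 2x3 grid: plain countertop on the left, dancing friend on the right.
    example = [
        [0.05, 0.10, 0.90],
        [0.00, 0.20, 1.00],
    ]
    for row in heat_map(example):
        print(row)
```

Low-scoring regions print as mostly blue triples and high-scoring ones as mostly red; a real tool would overlay these colors on the photo itself.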

The tool is as useful to lifestyle bloggers who shoot bird’s-eye views of legs in bed with a coffee as it is to serious photojournalists and advertisers, and it is best explained in MIT’s own words:

> Neural networks work to correlate data without any human guidance on what the underlying causes or correlations might be. They are organized in layers of processing units that each perform random computations on the data in succession. As the network receives more data, it readjusts to produce more accurate predictions.
>
> The team fed its algorithm tens of thousands of images from several different datasets, including LaMem and the scene-oriented SUN and Places (all of which were developed at CSAIL). The images had each received a “memorability score” based on the ability of human subjects to remember them in online experiments.
>
> The team then pitted its algorithm against human subjects by having the model predict how memorable a group of people would find a new never-before-seen image. It performed 30 percent better than existing algorithms and was within a few percentage points of the average human performance.
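How do you “pit” a model against people? One common approach, and only an assumption here since the quote doesn’t name MIT’s exact metric, is to check whether the model ranks images in the same order as the human memorability scores, using Spearman’s rank correlation. The sketch below implements that from scratch; the function names and all the scores are hypothetical.

```python
# Sketch: compare predicted memorability with human memorability scores.
# ASSUMPTION: Spearman's rank correlation is used as the yardstick; the
# article doesn't say which metric MIT used. All numbers are invented.


def ranks(values: list[float]) -> list[float]:
    """Rank values 1..n; tied values share the average of their positions."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    result = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        # Extend j across a run of equal values.
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        avg_rank = (i + j) / 2 + 1  # positions i..j are 0-based
        for k in range(i, j + 1):
            result[order[k]] = avg_rank
        i = j + 1
    return result


def spearman(xs: list[float], ys: list[float]) -> float:
    """Pearson correlation of the ranks: 1.0 means identical ordering."""
    if len(xs) != len(ys) or len(xs) < 2:
        raise ValueError("need two equal-length lists with at least two items")
    rx, ry = ranks(xs), ranks(ys)
    n = len(xs)
    mx, my = sum(rx) / n, sum(ry) / n
    cov = sum((a - mx) * (b - my) for a, b in zip(rx, ry))
    vx = sum((a - mx) ** 2 for a in rx)
    vy = sum((b - my) ** 2 for b in ry)
    if vx == 0 or vy == 0:
        raise ValueError("correlation is undefined when every value is tied")
    return cov / (vx * vy) ** 0.5


if __name__ == "__main__":
    human = [0.9, 0.4, 0.7, 0.2]  # hypothetical human memorability scores
    model = [0.8, 0.5, 0.6, 0.1]  # hypothetical model predictions
    print(spearman(human, model))  # same ordering, so 1.0
```

A score near 1.0 means the model picks out the same “keepers” people do; comparing the model’s score with how well one group of people predicts another group is one way to get a “human performance” baseline.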

Now go get those likes.
